The Endless Appetite: Why the AI 'Bubble' Is a Mirage

Brief

Thesis: AI is heading toward the Holodeck: real-time generated environments, not better chatbots. That destination reveals why today's AI build-out is a structural inversion of the dot-com bubble, not a repeat.

Angle: Stake the contentious position: we are heading toward generated reality (games, apps, environments rendered on-the-fly). The compute demand for that trajectory is so vast that $100M researcher salaries are rational and calling this a bubble is like calling the power grid a fad.

Framework: Infrastructure evolution — from text prediction to real-time world generation, each step multiplies compute demand by orders of magnitude.

Historical anchor: Jevons Paradox (1865). Dot-com dark fiber (2001).

Recent anchor: Google DeepMind's Project Genie (Jan 2026). DeepSeek's 10x efficiency gain triggering 5,000% usage growth. Hyperscaler capex hitting $602B in 2026.

Key tension: The power grid is the only real constraint. If electricity can't scale, the Holodeck stays science fiction.

The Endless Appetite: Why the AI "Bubble" Is a Mirage

Last month, Google DeepMind launched Project Genie, a system that generates playable, navigable 3D worlds from a text prompt. You type a description, and it renders the environment around you in real time. Walk forward, and it generates the path ahead. Look left, and it builds what's there. Sixty seconds of generated reality at 24 frames per second, running today, in a browser.

It's clunky. It's limited. And it is a prototype of the thing that will eat all the compute we can build.

I'm going to stake a contentious position here: AI is not heading toward better chatbots. It is heading toward the Holodeck. Real-time generated environments: games that build themselves as you play, apps that assemble on the fly for the task at hand, AR overlays that render a personalized world on top of the physical one. Not in some distant science fiction future. On a trajectory we can already trace.

This won't arrive overnight. It will be a graduated build. AR glasses are already here: CES 2026 was flooded with them from RayNeo, XREAL, Lumus, and others, with HDR displays, wider fields of view, and integrated connectivity. Then richer VR environments. Then eventually full-dive generated worlds that respond to you like physical ones do. Each step consumes orders of magnitude more compute than the last.

And those are just the uses we can see. Nobody in 1995 predicted TikTok or Uber. Whatever comes next will dwarf what we're building for today.

I might be wrong about when we get to the Holodeck. I'm not wrong about the direction. And the direction alone unravels the entire "AI bubble" narrative.

Why This Changes the Math

Here is what most people miss about where AI compute is heading. Text, the thing powering every chatbot conversation you've had, is the lowest-resolution form of intelligence. A single frame of 1080p video consumes roughly 10,000 tokens, the equivalent of 5,000 words. At 24 frames per second, one second of generated video burns through more tokens than a novel.
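The novel comparison is easy to check by multiplying it out. The sketch below is only arithmetic: it takes the ~10,000-tokens-per-frame figure above and its implied two tokens per word at face value, and assumes a hypothetical 90,000-word novel (`NOVEL_WORDS` is our illustrative number, not a sourced one).

```python
# Back-of-envelope check: tokens for one second of generated video vs. one novel.
# Every constant here is an illustrative assumption, not a measurement.

TOKENS_PER_FRAME = 10_000          # rough per-frame figure quoted above (1080p)
FRAMES_PER_SECOND = 24             # standard film frame rate
TOKENS_PER_WORD = 10_000 / 5_000   # ratio implied by "10,000 tokens ~ 5,000 words"
NOVEL_WORDS = 90_000               # hypothetical length of a typical novel


def video_tokens(seconds: float) -> float:
    """Tokens needed to generate `seconds` of video, one frame at a time."""
    return seconds * FRAMES_PER_SECOND * TOKENS_PER_FRAME


def novel_tokens(words: int = NOVEL_WORDS) -> float:
    """Approximate token count for a book of `words` words."""
    return words * TOKENS_PER_WORD


one_second = video_tokens(1)
novel = novel_tokens()
print(f"1 s of video: {one_second:,.0f} tokens")
print(f"one novel:    {novel:,.0f} tokens")
print(f"video/novel:  {one_second / novel:.2f}x")
```

Under these assumptions one second of video comes to 240,000 tokens against 180,000 for the novel. The comparison is sensitive to the per-frame figure: if frames cost about a quarter fewer tokens, the two roughly tie.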

Today's AI predicts the next word. Tomorrow's AI generates the next frame, and the frame after that, and the one after that, in real time, responsive to your actions. Project Genie is doing this now at 720p for 60 seconds. Scale that to persistent worlds, to hours of play, to the resolution your eyes actually demand, and you begin to see the compute curve we're on.
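Scaling the demo up is multiplicative. A minimal sketch, assuming (our assumption, not DeepMind's published method) that tokens per frame grow in proportion to pixel count, as they would with fixed-size image patches, and holding the frame rate constant:

```python
# Sketch: per-session generation work relative to a 60-second 720p session.
# ASSUMPTION: tokens per frame scale linearly with pixel count; fps is fixed.

RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def pixels(name: str) -> int:
    """Pixel count of a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def session_multiplier(resolution: str, seconds: float,
                       base_resolution: str = "720p",
                       base_seconds: float = 60) -> float:
    """Work for a session relative to the baseline (default: 60 s at 720p)."""
    pixel_ratio = pixels(resolution) / pixels(base_resolution)
    return pixel_ratio * (seconds / base_seconds)


for res in RESOLUTIONS:
    print(f"{res:>5}, 1 hour: {session_multiplier(res, 3600):,.0f}x a 60 s 720p session")
```

Under this assumption, an hour at 4K is about 540 times the work of today's 60-second 720p session, for each user.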

Can we get there? Maybe. According to Epoch AI, the amount of compute needed to hit a given level of performance has been halving every eight months. That's three times faster than Moore's Law. Within two years, the capability you use today will need roughly an eighth of the compute. Not because the chips got better (though they are improving too, roughly 2.5x per year), but because the algorithms got smarter (3x per year). Combine hardware gains, algorithmic breakthroughs, and hundreds of billions in infrastructure investment, and we might get there. The fact that all of that progress and all of that money still only gets us a "might" tells you everything about the scale of compute the Holodeck demands.
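These growth rates compound, and it helps to multiply them out. A minimal sketch that treats the quoted figures as exact inputs (real trend estimates carry wide error bars): halving every 8 months works out to about 2.8x per year, and three halvings over 24 months leave one-eighth of the original compute.

```python
# Sketch: compounding the rates quoted above. The 8-month halving time is the
# Epoch AI figure; 24 months for Moore's Law and 2.5x/year for hardware are the
# approximations used in the text, treated here as exact inputs.

ALGO_HALVING_MONTHS = 8.0       # months for required compute to halve
MOORE_DOUBLING_MONTHS = 24.0    # common approximation of Moore's Law
HARDWARE_GAIN_PER_YEAR = 2.5    # chip improvement quoted in the text


def algorithmic_gain(months: float) -> float:
    """Factor by which compute for a fixed capability shrinks after `months`."""
    return 2.0 ** (months / ALGO_HALVING_MONTHS)


def compute_fraction(months: float) -> float:
    """Share of today's compute needed for today's capability after `months`."""
    return 1.0 / algorithmic_gain(months)


per_year = algorithmic_gain(12)
print(f"algorithmic gain per year:  {per_year:.2f}x")                     # 2.83x
print(f"compute needed after 2 yrs: {compute_fraction(24):.3f}")          # 0.125
print(f"pace vs. Moore's Law:       {MOORE_DOUBLING_MONTHS / ALGO_HALVING_MONTHS:.0f}x")  # 3x
print(f"hardware x algorithms/year: {HARDWARE_GAIN_PER_YEAR * per_year:.1f}x")  # 7.1x
```

Multiplying the two annual factors, as the last line does, assumes hardware and algorithmic gains are independent and stack cleanly; that is an assumption of this sketch, not an established result.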

Now consider what that capability gets pointed at. Video games today are pre-built. Every texture, every building, every NPC is crafted in advance and shipped on a disc or download. What happens when games are generated, when the world doesn't exist until you look at it? When an app isn't downloaded but assembled in real time from your intent? That's not a marginal increase in compute demand. It's roughly 10,000 tokens for every frame, at dozens of frames per second, for every person using the system at the same time.
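To see why generated worlds dwarf chat, compare aggregate throughput. The sketch below is arithmetic under hypothetical inputs: the ~10,000 tokens per frame and 24 fps from above, an invented chat pattern of one 500-token reply every 30 seconds, and a hypothetical one million simultaneous users (`USERS`). None of these are measured workloads.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Token throughput of a workload; every number fed in below is hypothetical."""
    tokens_per_step: float   # tokens per frame (or per chat reply)
    steps_per_second: float  # frames per second (or replies per second)
    concurrent_users: int

    def tokens_per_second(self) -> float:
        """Aggregate tokens/s the serving fleet must sustain."""
        return self.tokens_per_step * self.steps_per_second * self.concurrent_users


USERS = 1_000_000  # hypothetical number of simultaneous users

# Hypothetical chat usage: one 500-token reply every 30 seconds per user.
chat = Workload(tokens_per_step=500, steps_per_second=1 / 30, concurrent_users=USERS)
# Generated world: ~10,000 tokens per frame at 24 fps, per the figures above.
world = Workload(tokens_per_step=10_000, steps_per_second=24, concurrent_users=USERS)

print(f"chat:  {chat.tokens_per_second():.3g} tokens/s")
print(f"world: {world.tokens_per_second():.3g} tokens/s")
print(f"world / chat: {world.tokens_per_second() / chat.tokens_per_second():,.0f}x")
```

The ratio does not depend on `USERS`; the user count only sets the absolute scale the fleet must serve.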

NVIDIA's Holodeck already renders photorealistic collaborative spaces. The trajectory is visible. The question isn't whether we'll need a million times more compute. It's whether we can build it fast enough. And the organizations that will capture value from this trajectory are the ones building their AI strategy now, not waiting for certainty.

The One Real Risk

So is there a yellow flag? Yes.

If copper wire was the last mile of 2001, electricity is the last mile of 2026. We have the silicon. We have the algorithms. What we're running out of is power.

The data centers currently announced would consume the safety margin that grid operators require. Not sometimes, but permanently, 24/7. The equivalent of 100 new nuclear plants. Tens of thousands of square miles of solar. Wind farms covering half of Texas. This is why a reactor at Three Mile Island is being restarted. This is why tech companies are building their own power stations. In an arms race, you can't afford to wait for the utility company.
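Converting "number of plants" into energy and installed capacity is standard arithmetic. A minimal sketch, assuming (our assumptions, not the article's) an average load of about 100 GW, roughly what 100 one-gigawatt reactors supply, and illustrative capacity factors for wind and solar; real capacity factors vary widely by site.

```python
# Sketch: energy and installed capacity for a constant, around-the-clock load.
# LOAD_GW and the capacity factors are illustrative assumptions.

HOURS_PER_YEAR = 8_760
LOAD_GW = 100.0                                   # ~100 reactors x ~1 GW each
CAPACITY_FACTORS = {"wind": 0.35, "solar": 0.25}  # hypothetical averages


def annual_energy_twh(avg_load_gw: float) -> float:
    """Energy in TWh used by a load that runs at `avg_load_gw` all year."""
    return avg_load_gw * HOURS_PER_YEAR / 1_000


def nameplate_gw(avg_load_gw: float, capacity_factor: float) -> float:
    """Installed capacity whose *average* output equals the load.

    Ignores storage, curtailment, and transmission losses; serving a 24/7 load
    from variable sources would need storage on top of this.
    """
    if not 0.0 < capacity_factor <= 1.0:
        raise ValueError("capacity_factor must be in (0, 1]")
    return avg_load_gw / capacity_factor


print(f"energy per year: {annual_energy_twh(LOAD_GW):,.0f} TWh")
for source, cf in CAPACITY_FACTORS.items():
    print(f"{source:>5} nameplate: {nameplate_gw(LOAD_GW, cf):,.0f} GW")
```

The gap between average load and nameplate capacity is why variable sources need far more installed capacity, and land, than a reactor fleet of the same average output.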

Behind the power grid sits a second gate: the chips themselves. The rare earth elements, the ultra-pure silicon, the extreme ultraviolet (EUV) lithography machines that only one company on Earth (ASML) can build. Supply chains that thread through geopolitically fragile territory. But without the electrons to power the chips, the chips don't matter. Solve electricity first, then worry about the supply chain.

We know how to generate electricity. We've done it at massive scale before. We know how to mine materials and build factories. But the power grid is the constraint that bites first, and the one variable that could turn an infrastructure surge into a wildfire.

The Present Proof

Even without the Holodeck, the bubble comparison fails on its own terms.

Every bubble has a lie, a thing the majority believes that turns out to be false. The dot-com lie wasn't that the internet was useless. The lie was about infrastructure. Between 1996 and 2001, companies spent $500 billion laying fiber optic cable: thirteen new cross-country routes, plus cables under the ocean. But almost nobody spent a dollar on the last mile. The copper telephone wire from each home to the nearest fiber hub carried 100,000 times less data than the glass feeding it. Pages took 30 seconds to load. Going online killed your phone line. Remember the family fights?

[Aside: If you remember the Trojan Room coffee pot, where Cambridge computer scientists streamed a camera feed of their coffee maker starting in 1991, in what became the first viral video, you remember the dream. The plumbing just couldn't deliver.]

By 2001, 90% of that fiber sat unlit. Dark fiber. Billions in glass with nothing to carry. Many of the telecoms didn't just fall. They went to zero.

Now look at AI. There is no dark silicon. Nvidia commands 97% of the data center GPU accelerator market and reported $39.1 billion in data center revenue in a single quarter, up 73% year over year. Demand for GPU compute has outpaced traditional server demand by more than 2x. Not a single chip is sitting idle. They're running around the clock, at such high capacity that occasionally one melts.

| | Dot-Com (2001) | AI (2026) |
|---|---|---|
| Infrastructure | Fiber optic cable | GPU compute chips |
| Utilization | 90% dark (unused) | 0% dark (at capacity) |
| Bottleneck | Last mile (copper wire) | Power grid (electricity) |
| Economics | Speculative overbuild | Supply-constrained demand |

The 2001 bubble was supply-rich, demand-poor. AI is supply-starved, demand-rich. These are not the same crisis wearing different clothes. They are structural inversions of each other.

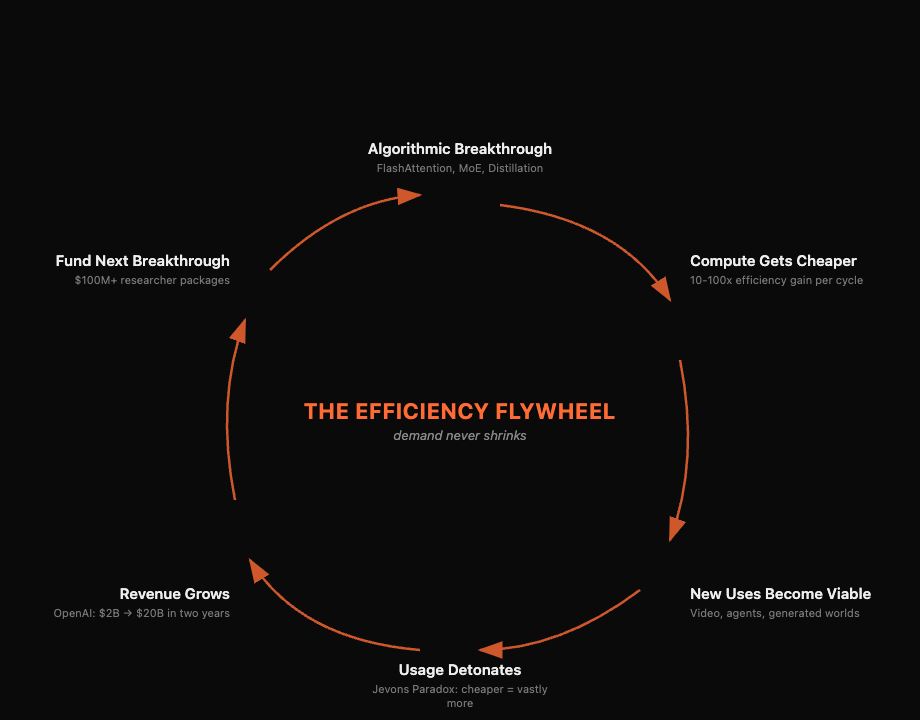

Efficiency Feeds the Appetite

I know, I know. "But DeepSeek!" A Chinese firm produced a high-performing model using a tenth of the usual compute. Nvidia lost nearly $600 billion in market cap in a single day, the largest one-day loss in US stock market history. The narrative: if AI gets more efficient, we won't need all those data centers.

This misreads how efficiency actually works in AI.

Models keep getting cheaper and more powerful through algorithmic breakthroughs: smarter architectures that activate only a fraction of their parameters for each token, better memory access patterns that squeeze more speed from the same hardware, and distillation techniques that transfer what a data-center-scale model knows into one small enough to run on a laptop. The cost of frontier inference has been dropping 5-10x per year. DeepSeek's efficiency breakthrough was not an anomaly. It was the trend, arriving on schedule.
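"Activating only a fraction of the parameters" is mixture-of-experts routing: a small router scores every expert for each token, and only the top few actually run. A minimal pure-Python sketch of generic top-k softmax routing (the Mixtral-style variant, not DeepSeek-V3's exact gating, which uses a different scoring function and adds shared experts); the sizes and random weights are hypothetical toys.

```python
import math
import random


def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(w: list[list[float]], x: list[float]) -> list[float]:
    """Dense matrix-vector product."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def moe_layer(x, experts, router, k):
    """Send one token through its top-k experts; the other experts never run.

    `experts` is a list of weight matrices, `router` a (num_experts x dim) matrix.
    Returns the gate-weighted output and the indices of the experts used.
    """
    logits = matvec(router, x)
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    gates = softmax([logits[i] for i in chosen])  # renormalize over chosen experts
    out = [0.0] * len(x)
    for gate, i in zip(gates, chosen):
        y = matvec(experts[i], x)  # only the selected experts' weights are touched
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen


rng = random.Random(0)
DIM, NUM_EXPERTS, TOP_K = 8, 16, 2  # hypothetical toy sizes
experts = [[[rng.gauss(0, 0.1) for _ in range(DIM)] for _ in range(DIM)]
           for _ in range(NUM_EXPERTS)]
router = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]

token = [rng.gauss(0, 1) for _ in range(DIM)]
out, used = moe_layer(token, experts, router, TOP_K)
print(f"experts used for this token: {sorted(used)} ({TOP_K} of {NUM_EXPERTS})")

# DeepSeek-V3's reported sizes: 37B of 671B parameters active per token.
print(f"DeepSeek-V3 active share: {37 / 671:.1%}")
```

Per token, only `TOP_K` of the `NUM_EXPERTS` weight matrices are multiplied; that ratio, not the total parameter count, drives the cost of the expert layers.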

But here's what the market missed on that $600 billion panic day: cheaper doesn't mean less. It means vastly more.

William Stanley Jevons documented this in 1865 with coal: as steam engines became more efficient, coal consumption exploded because new uses emerged. Whale oil lit one lantern per wealthy household at over $1,000 a year in today's money. Kerosene dropped the cost 90%. Suddenly every person had their own lamp. Electricity made it cheaper still, and now we have more lights than we know what to do with.
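Jevons's mechanism can be made concrete with a textbook constant-elasticity demand model (our choice of model; Jevons argued from historical coal data, not from this formula). If a 10x efficiency gain cuts the price of the service by 90%, mirroring the kerosene example, total resource use scales as `efficiency ** (elasticity - 1)`, so it rises whenever demand for the service is elastic (elasticity above 1).

```python
# Sketch: Jevons' paradox under constant-elasticity demand for the *service*
# (light, motive power, inference). The model and parameters are assumptions.


def resource_use(efficiency: float, elasticity: float,
                 resource_price: float = 1.0, scale: float = 1.0) -> float:
    """Resource consumed when service demand is scale * price ** -elasticity.

    A more efficient device makes each unit of service cheaper
    (price = resource_price / efficiency) but needs less resource per unit.
    Closed form: scale * resource_price**-elasticity * efficiency**(elasticity - 1).
    """
    service_price = resource_price / efficiency
    service_demand = scale * service_price ** (-elasticity)
    return service_demand / efficiency


# A 10x efficiency gain cuts the cost of the service by 90%.
for elasticity in (0.5, 1.0, 1.5):
    ratio = resource_use(10.0, elasticity) / resource_use(1.0, elasticity)
    if abs(ratio - 1.0) < 1e-9:
        verdict = "unchanged"
    elif ratio > 1.0:
        verdict = "rises"
    else:
        verdict = "falls"
    print(f"elasticity {elasticity}: resource use x{ratio:.2f} ({verdict})")
```

The bet behind the compute build-out is, in these terms, that demand for AI output is elastic: each price cut unlocks more use than it saves.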

AI is following the same pattern. In the year after the DeepSeek moment, usage didn't grow by percentages. It grew by multiples. Every time inference gets cheaper, applications that were previously uneconomical become viable: real-time video generation, always-on AI agents, personalized tutoring for every student, generated worlds. Each new use case consumes as much compute as the last generation's entire workload. Efficiency doesn't shrink the appetite. It feeds it.

The bears will point out, fairly, that AI infrastructure spend outpaces AI revenue. In 2026, hyperscalers are projected to spend $602 billion in capex, roughly 75% of it on AI. That is a staggering number.

But look at the revenue curve. OpenAI tripled to $20 billion ARR in 2025, up from $2 billion just two years prior. Anthropic went from under $1 billion to $9 billion ARR in a single year. Microsoft's AI revenue hit an estimated $25 billion, growing 175% year over year. Google Cloud grew 48% to $17.7 billion in a single quarter. The gap is real, but the revenue curve is steeper than cloud was at the same stage. AWS was a money pit before it became Amazon's profit engine. Build the capacity; the applications follow. The difference this time? The applications aren't waiting for the capacity to be built. They're already consuming every GPU-hour available.
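The growth rates behind these figures are easy to derive. A minimal sketch that only rearranges the numbers quoted in this section (no outside data): OpenAI's 10x over two years averages about 3.2x per year, and 175% growth to $25 billion implies Microsoft's AI revenue was roughly $9 billion a year earlier.

```python
# Sketch: growth arithmetic behind the revenue and capex figures quoted above.
# Inputs are the article's numbers; outputs are simple derived rates.


def annual_multiple(start: float, end: float, years: float) -> float:
    """Average year-over-year multiple needed to grow from start to end."""
    return (end / start) ** (1.0 / years)


def prior_value(current: float, growth_rate: float) -> float:
    """Value one period earlier, given the current value and growth (1.75 = 175%)."""
    return current / (1.0 + growth_rate)


# OpenAI: $2B -> $20B ARR over two years.
print(f"OpenAI average multiple: {annual_multiple(2, 20, 2):.2f}x per year")
# Anthropic: under $1B -> $9B in one year, so at least 9x.
print(f"Anthropic multiple:      >= {annual_multiple(1, 9, 1):.0f}x")
# Microsoft: ~$25B AI revenue, up 175% year over year.
print(f"Microsoft prior year:    ~${prior_value(25, 1.75):.1f}B")
# Hyperscaler capex: $602B, roughly 75% of it on AI.
print(f"AI share of capex:       ~${602 * 0.75:.0f}B")
```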

So is AI a bubble? If you believe the destination is better chatbots, maybe. If you see where the trajectory points, toward generated reality, toward the Holodeck, then frankly, calling this a bubble is missing the forest for the trees.

The dot-com was a green field. Fragile seedlings, nobody sure which would grow. AI is not a green field. It's the existing forest getting taller. The same companies, the same cables, the same devices. The redwoods (Google, Microsoft, Meta, Amazon) aren't planting new trees. They're extending their canopy. Alphabet just pledged $175-185 billion in AI infrastructure for 2026 alone, more than double last year. That's not speculation. That's a company with $400 billion in annual revenue doubling down on proven demand.

If you're looking for a bubble, look at the seedlings: the thousands of AI startups that will burn through venture capital and die. But their death isn't evidence of a dying forest.

The appetite is real, and it is endless. The forest hasn't stopped growing. The only question is whether we can keep the lights on. Wouldn't that just be something.

Links & Resources

- Google DeepMind Project Genie — Real-time world generation from text prompts

- NVIDIA Holodeck — Photorealistic collaborative VR

- Algorithmic Progress in Language Models — Epoch AI research: compute halving every 8 months

- The Price of Progress — Inference cost dropping 5-10x per year

- Epoch AI Trends — Training compute growing 5x/year since 2020

- Situational Awareness — Leopold Aschenbrenner's analysis of AI compute trajectories

- Hyperscaler Capex $602B in 2026 — IEEE ComSoc

- Tech AI Spending Approaches $700B — CNBC, Feb 2026

- OpenAI Triples to $20B ARR — Revenue growth trajectory

- Jevons Paradox — Why efficiency increases consumption

- The Trojan Room Coffee Pot — The first webcam and viral video

- Dark Fiber — The unused backbone of the dot-com era

- DeepSeek — The efficiency breakthrough that spooked markets

- FlashAttention — Tri Dao's memory-access optimization, now in every major model

- FlashAttention-3 Blog — Latest iteration and technical deep dive

- DeepSeek-V3 Technical Report — Mixture of Experts architecture: 671B params, 37B active

- DeepSeek-V3 Cost Analysis — $5.6M training cost breakdown

- DeepSeek-R1 Distillation — 671B model compressed to 7B with retained reasoning